Understanding Frontier LLMs

A very good presentation on providers serving models.

This video is a massive 38 minutes, but if you’ve ever wondered what the heck is going on back there when you are interacting with one of the top service provider LLMs, this is for you.

Some of this was remedial for me, as a longtime Unix and network operator, but I think it’s quite accessible even if you’re a one laptop/one phone/no coding player trying to get your head around things. The terms are explained starting from square one, you’ll end up with a broad understanding of how the systems work.

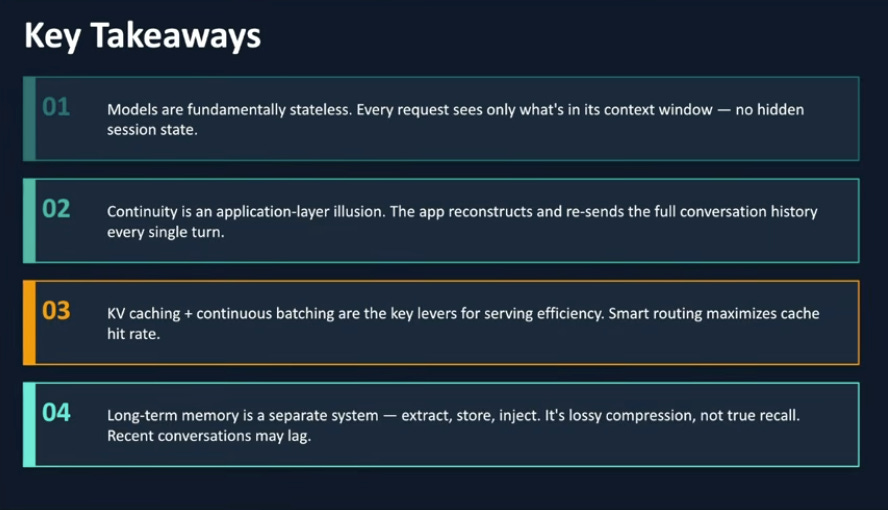

Here are the presenter’s Key Takeaways.

Perplexity:

Here is a prompt I just gave Perplexity.

And this is what Perplexity’s Sonar model got out of it after a few seconds.

LLMs are stateless functions on a GPU cluster, not minds that remember you.

Any sense that the model “knows you” is created by the application layer, not the model.

Your app must reconstruct conversations, extract facts, and manage memory explicitly.

The real “AI” product work starts when you design those memory and serving patterns.

Claude Cowork:

I have been employing Claude Cowork and Perplexity in parallel on tasks like this, observing the differences in their results. I waded through getting Claude to do this so you guys can see the results. This took about 30x as long as Perplexity and several manual interventions because Opus 4.6 was doing at this like a bored 7th grader, messing around in YouTube Help(!) trying to get the transcript. Often Claude will run circles around Perplexity, but not this time.

The Numbers Behind the Magic (1:00) — The raw scale of LLM infrastructure: how many GPUs, how much memory, how many simultaneous users.

Models Are Fundamentally Stateless (34:08) — Every request sees only what’s in the context window. There is no hidden session state — just like most internet services, and for the same reasons.

The Stateless Illusion (33:13) — Continuity is an application-layer trick. Your browser or app reconstructs and resends the full conversation history every single turn. The model has zero memory; the app fakes it.

KV Caching & Continuous Batching (34:58) — The two key levers for serving efficiency at scale. Smart routing maximizes cache hit rate so continuing a conversation lands you on the same backend node.

Memory Architecture Patterns (33:26) — Long-term memory is a completely separate system: extract, store, inject. Four approaches compared — explicit fact extraction, vector database retrieval, summarization chains, and the hybrid approach production systems actually use.

“No Ghost in the Machine” (36:07) — The closing argument: no emerging consciousness, no digital mind. A very large matrix of numbers doing linear algebra at extraordinary speed. The next time someone tells you AGI is around the corner, ask them to explain the KV cache.